SHAP: The Theoretically Optimal Machine Learning Explanation Algorithm

SHAP (SHapley Additive exPlanations) is a game-theoretic, model-agnostic approach to machine learning interpretability. It can quantify each feature’s contribution to a single prediction while also aggregating local explanations into a global view of the model. SHAP has strong theoretical guarantees and, thanks to substantial engineering optimization, is also practical in real-world workflows. This article introduces the core theory behind SHAP and shows several example visualizations.

Shapley Values

The Shapley value comes from cooperative game theory and was originally proposed as a fair way to divide gains within a team. Imagine a crew of p pirates that wins 100 gold coins after a successful expedition. How should the reward be split fairly? Equal division is clearly unsatisfactory, but dividing by “ability” is not enough either. Pirate A may be the strongest fighter, while Pirate B may be physically weak but the only doctor on the ship.

Any allocation rule should satisfy several conditions:

- Efficiency. Everyone’s contribution scores should sum to 100, so all 100 coins are allocated.

- Symmetry. If two people create the same value, they should receive the same reward.

- Dummy player. If a person adds no value to any coalition they join, that person should receive 0.

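- Additivity. If the 100 coins come from two separate hauls of 40 and 60 coins, splitting the 100 coins at once should give each pirate the same share as splitting the 40 and the 60 separately and adding the results.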

It can be shown that the Shapley value is the unique solution satisfying these conditions. The contribution score of pirate j is

$$ \phi_j(val)=\sum_{S\subseteq\{1,\ldots,p\} \backslash \{j\}}\frac{|S|!\left(p-|S|-1\right)!}{p!}\left(val\left(S\cup\{j\}\right)-val(S)\right) $$

Here S ranges over every coalition that can be formed from the pirates other than j: it may be empty, or contain 1, 2, 3, ..., p-1 members. val is the value function that measures the payoff of each coalition, with

$$ val(\{1,\dots, p\})=100 $$

The weight in the formula is the probability that, when the pirates join one at a time in a uniformly random order, exactly the members of S arrive before pirate j:

$$ \frac{|S|!\left(p-|S|-1\right)!}{p!} $$

The term \(val\left(S\cup\{j\}\right)-val(S)\) measures the extra value created when pirate j joins coalition S.
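To make the formula concrete, here is a minimal sketch that enumerates every coalition for a three-pirate crew. The payoff table is invented for illustration (a fighter A, a doctor B, and a deckhand C who adds nothing); only the formula itself comes from the text above.

```python
from itertools import combinations
from math import factorial

def shapley_values(players, val):
    """Exact Shapley values: enumerate every coalition S that excludes player j."""
    p = len(players)
    phi = {}
    for j in players:
        others = [k for k in players if k != j]
        total = 0.0
        for size in range(p):  # |S| = 0, 1, ..., p-1
            weight = factorial(size) * factorial(p - size - 1) / factorial(p)
            for members in combinations(others, size):
                S = frozenset(members)
                total += weight * (val(S | {j}) - val(S))  # marginal contribution of j
        phi[j] = total
    return phi

# Hypothetical payoffs: only A and B matter, C never changes the outcome.
PAYOFF = {
    frozenset(): 0,
    frozenset("A"): 60,
    frozenset("B"): 20,
    frozenset("AB"): 100,
}

def val(coalition):
    return PAYOFF[frozenset(coalition) & frozenset("AB")]

phi = shapley_values(["A", "B", "C"], val)
print({name: round(score, 6) for name, score in phi.items()})
```

With these made-up payoffs, A receives 70 coins, B receives 30, and C receives 0. The shares sum to 100 (efficiency), and C gets nothing (dummy player).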

The SHAP Definition

Consider a linear model \(\hat{f}(x)=\beta_0+\beta_{1}x_{1}+\ldots+\beta_{p}x_{p}\). The effect of feature \(x_j\) on the output clearly depends on its coefficient \(\beta_j\), so the marginal contribution of \(x_j\) is

$$ \phi_j(\hat{f})=\beta_{j}x_j-\beta_{j}E(X_{j}) $$

The total value to be allocated is

$$ \begin{aligned} \sum_{j=1}^{p}\phi_j(\hat{f})=&\sum_{j=1}^p(\beta_{j}x_j-E(\beta_{j}X_{j}))\\=& (\beta_0+\sum_{j=1}^p\beta_{j}x_j)-(\beta_0+\sum_{j=1}^{p}E(\beta_{j}X_{j}))\\=& \hat{f}(x)-E(\hat{f}(X)) \end{aligned} $$
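The identity above is easy to check numerically. The sketch below uses made-up coefficients and synthetic data, with expectations replaced by sample means; it is only meant to confirm that the per-feature contributions sum to the gap between one prediction and the average prediction.

```python
import random

random.seed(0)

# Hypothetical linear model: intercept and three coefficients.
beta0 = 1.5
beta = [2.0, -1.0, 0.5]

# Synthetic data set standing in for the distribution of X.
X = [[random.gauss(0, 1) for _ in beta] for _ in range(1000)]

def predict(row):
    return beta0 + sum(b * v for b, v in zip(beta, row))

feature_means = [sum(row[j] for row in X) / len(X) for j in range(len(beta))]
mean_prediction = sum(predict(row) for row in X) / len(X)

x = X[0]  # the instance to explain
phi = [b * (v - m) for b, v, m in zip(beta, x, feature_means)]

print("contributions:", [round(c, 4) for c in phi])
print("sum of contributions:", round(sum(phi), 6))
print("f(x) - mean prediction:", round(predict(x) - mean_prediction, 6))
```

The last two printed numbers agree up to floating-point error, and the intercept cancels exactly as in the derivation.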

For nonlinear models such as ensemble methods, we no longer have such direct weights, so we need a different formulation. Once we view a machine learning prediction as a cooperative game, the SHAP solution follows naturally. Treating each feature as a team member is intuitive; the key is defining the value function. In SHAP, that value function is

$$ val_{x}(S)=\int\hat{f}(x_{1},\ldots,x_{p})d\mathbb{P}_{x\notin{}S}-E_X(\hat{f}(X)) $$

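Here the integral averages the prediction over the features not in S, while the features in S stay fixed at their values in x. The model output can then be written as a linear combination of feature contributions. With M = p features and simplified inputs \(x_j'=1\) for every feature present in the instance being explained, the SHAP explanation satisfies additivity and efficiency: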

$$ \hat{f}(x)=\phi_0+\sum_{j=1}^M\phi_jx_j'=E_X(\hat{f}(X))+\sum_{j=1}^M\phi_j $$
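The following brute-force sketch ties the value function to the additive form. It makes several assumptions that are not in the original text: the model is a small invented nonlinear function, the integral is approximated by averaging over a set of background rows (which treats the features as independent), and all function names are hypothetical.

```python
from itertools import combinations
from math import factorial
import random

def model(row):
    # Hypothetical nonlinear model with an interaction between features 0 and 1.
    return row[0] * row[1] + max(row[2], 0.0)

def value(x, S, background):
    """Estimate val_x(S): fix features in S to x, average the rest over background rows."""
    baseline = sum(model(row) for row in background) / len(background)
    mixed = ([x[j] if j in S else row[j] for j in range(len(x))] for row in background)
    return sum(model(r) for r in mixed) / len(background) - baseline

def shap_values(x, background):
    """Exact Shapley values of the features under the estimated value function."""
    p = len(x)
    phi = []
    for j in range(p):
        others = [k for k in range(p) if k != j]
        total = 0.0
        for size in range(p):
            w = factorial(size) * factorial(p - size - 1) / factorial(p)
            for members in combinations(others, size):
                S = set(members)
                total += w * (value(x, S | {j}, background) - value(x, S, background))
        phi.append(total)
    return phi

random.seed(1)
background = [[random.gauss(0, 1) for _ in range(3)] for _ in range(200)]
x = [1.0, 2.0, -0.5]

phi = shap_values(x, background)
phi0 = sum(model(row) for row in background) / len(background)  # average prediction
print("phi_0 + sum(phi):", round(phi0 + sum(phi), 6))
print("f(x):            ", round(model(x), 6))
```

The two printed values match: the average prediction plus the feature contributions reconstructs the prediction for x.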

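In reality, however, feature effects often interact, so contributions cannot be read off one coefficient at a time, and an exact computation has to evaluate the value function on all \(2^p\) coalitions of features. This becomes prohibitively expensive as p grows, so the original SHAP work also summarizes and proposes several approximate algorithms, both model-agnostic and model-specific.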
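One simple way to see how an approximation can avoid enumerating every coalition is Monte Carlo sampling over feature orderings. The sketch below is a generic permutation-sampling estimator, not one of the specific algorithms from the SHAP paper. The model, the background data, and the function names are all hypothetical.

```python
import random

def model(row):
    # Hypothetical nonlinear model used only for illustration.
    return row[0] * row[1] + max(row[2], 0.0) - 0.3 * row[3]

def sampled_shap(x, background, predict, n_permutations=500, seed=0):
    """Approximate Shapley values by averaging marginal contributions over random orderings."""
    rng = random.Random(seed)
    p = len(x)
    phi = [0.0] * p
    for _ in range(n_permutations):
        order = list(range(p))
        rng.shuffle(order)
        z = list(rng.choice(background))  # start from a random background row
        previous = predict(z)
        for j in order:
            z[j] = x[j]                   # feature j joins the coalition
            current = predict(z)
            phi[j] += current - previous  # marginal contribution of j in this ordering
            previous = current
    return [total / n_permutations for total in phi]

random.seed(2)
background = [[random.gauss(0, 1) for _ in range(4)] for _ in range(300)]
x = [1.0, 2.0, -0.5, 0.7]

phi = sampled_shap(x, background, model)
print("estimated contributions:", [round(c, 3) for c in phi])
```

Each ordering costs p + 1 model evaluations, so the total cost grows linearly with the number of sampled orderings instead of exponentially with p. The price is sampling noise in the estimates.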

Applications and Visualization

shap is the open-source implementation of SHAP, with a clean API and excellent visualization support.

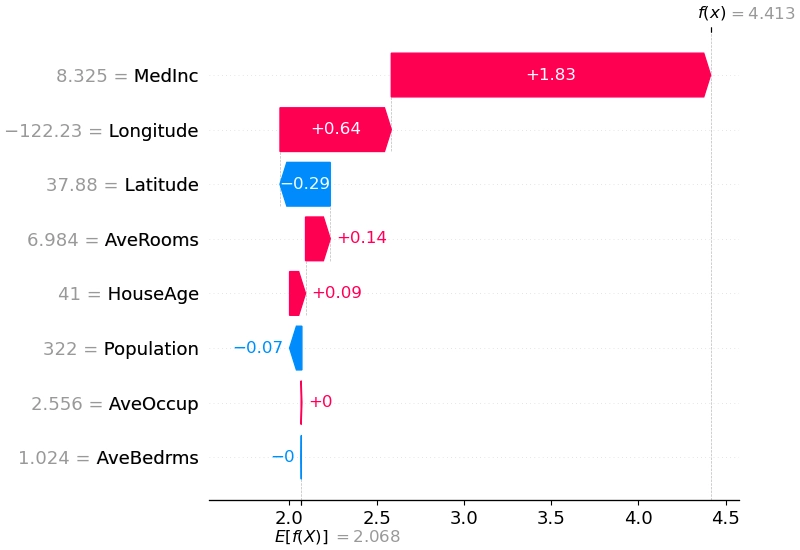

The first use case is explaining a single prediction. Because SHAP satisfies efficiency and additivity, a waterfall plot can show the exact contribution of each feature:

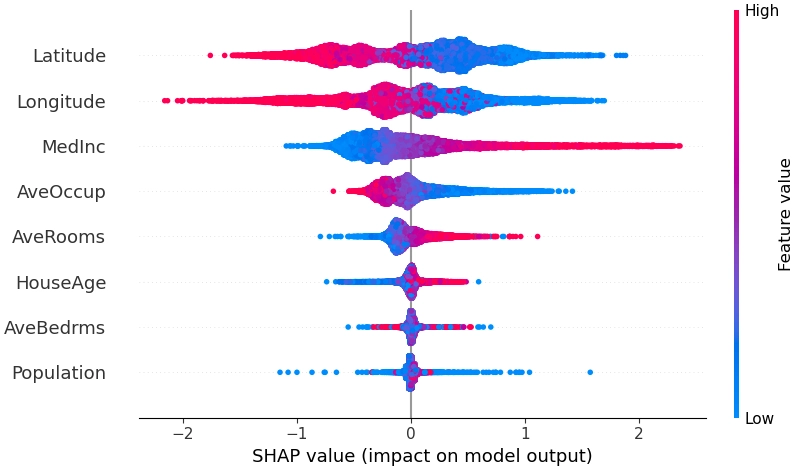

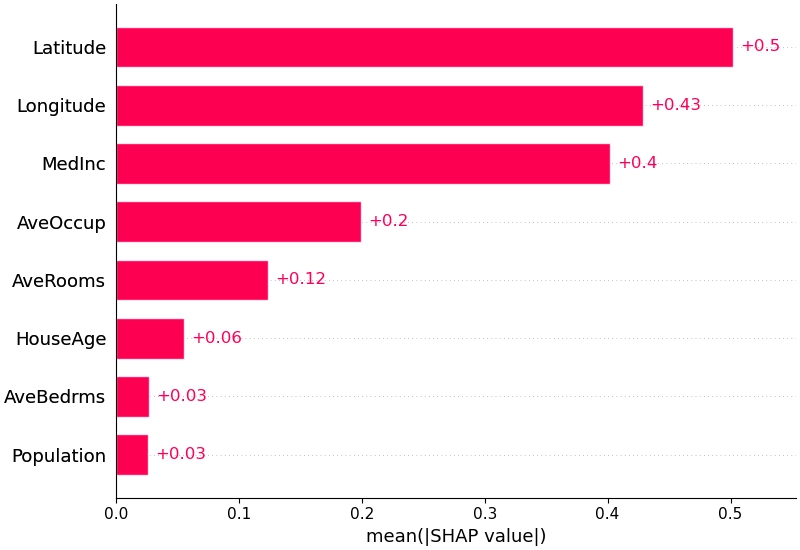

By aggregating SHAP values across samples, we can obtain a global explanation of the model:

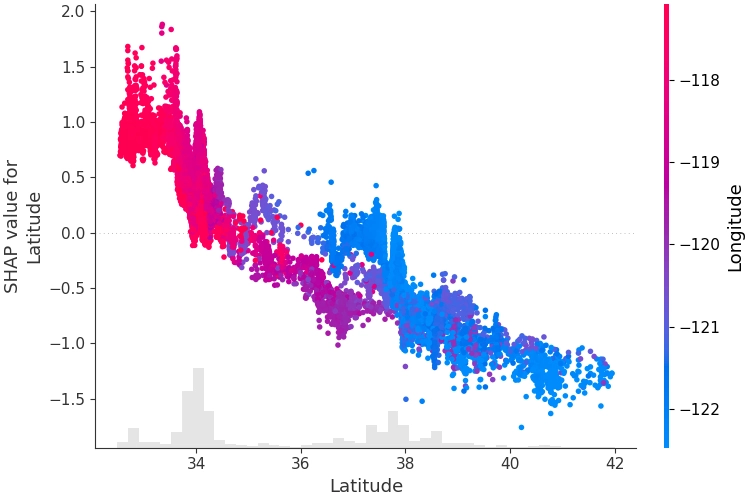

We can also inspect interactions between different features:
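The three kinds of plots discussed in this section can be produced with a few calls to the shap package. The helper below is a hedged sketch rather than the code behind the figures in this article: the function name and its parameters are hypothetical, and it assumes that shap is installed, that `model` is a fitted single-output model supported by `shap.Explainer`, and that `X` is a pandas DataFrame or NumPy array of input rows.

```python
def plot_shap_overview(model, X, row=0, feature=0):
    """Draw a waterfall, a beeswarm, and a scatter plot for a fitted model.

    Assumptions: shap is installed, `model` has a single output, and `X`
    is used both as background data and as the rows to explain.
    """
    import shap  # imported here so that defining the helper needs no extra packages

    explainer = shap.Explainer(model, X)  # shap picks an algorithm suited to the model
    explanation = explainer(X)            # one row of SHAP values per sample

    # Local explanation: contribution of each feature to one prediction.
    shap.plots.waterfall(explanation[row])

    # Global explanation: SHAP values of all samples, one line per feature.
    shap.plots.beeswarm(explanation)

    # Dependence of one feature's SHAP value on its value; passing the full
    # explanation as `color` lets shap choose a likely interacting feature.
    shap.plots.scatter(explanation[:, feature], color=explanation)

    return explanation
```

A typical call would be `plot_shap_overview(fitted_model, X_train)`, where both names stand for objects prepared elsewhere. For multi-output models such as some classifiers, the explanation has an extra output dimension and would need to be sliced before plotting.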

References

- Lundberg, S.M., Lee, S.-I.: A Unified Approach to Interpreting Model Predictions. In: Advances in Neural Information Processing Systems. Curran Associates, Inc. (2017).

- Molnar, C.: Interpretable Machine Learning, chapter “SHAP (SHapley Additive exPlanations)”. https://christophm.github.io/interpretable-ml-book/shap.html
