Houyi Project Technical Report - How to Win an Intelligent War in Apex Legends

HOUYI is positioned as a general-purpose aiming assistant for electronic shooting games. It currently supports Apex Legends, abbreviated below as apex. Its runtime framework is a two-stage pipeline of detection and tracking: first, object detection is used to identify enemies on the screen, and then the crosshair position is computed and corrected automatically. The mission of HOUYI is to use computer technology to resist the alienation imposed by electronic shooting games.

When nothing is stored within, forms reveal themselves. Its movement is like water, its stillness like a mirror, its response like an echo.

- Zhuangzi, “Under Heaven”

Forms Reveal Themselves

Deep learning algorithms represented by YOLO have excellent performance and mature deployment workflows, making them the mainstream choice for object detection in computer vision today. Houyi uses the PaddlePaddle framework from Baidu to train detection models, and uses Microsoft ONNX Runtime together with Nvidia TensorRT for deployment.

Data Understanding

The main difficulties of object detection in apex are:

- Apex has many playable characters, many skins, and complex visual effects

- Distinguishing enemies from teammates is difficult

- Distant targets, over 30 meters away, occupy very few pixels on screen, which makes this a small-object detection problem

- Real-time inference is required even while mainstream GPUs are under game load

Example from the center region of the screen:

Target region:

Data Collection and Annotation

Overall data pipeline. HOUYI uses about 13k manually annotated samples as the initial dataset. After the first model version is trained and deployed, real samples collected online are used to expand the dataset, so that the client and the cloud evolve together.

Data collection strategy. HOUYI samples screen images uniformly in real time in each of three states: hip fire, ADS firing, and ADS aiming. This reduces information loss across states as much as possible and keeps the training distribution both favorable for learning and aligned with actual gameplay.

| Mode | Sampling Region | Sampling Ratio | Compression |
|---|---|---|---|
| Hip Fire | 1080x1080 | 1/100 | Lossless |
| ADS Firing | 640x640 | 1/100 | Lossless |
| ADS Aiming | 640x640 | 1/500 | Lossless |
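The sampling ratios in the table can be read as per-frame keep probabilities. The sketch below illustrates that reading only. The mode keys and function names are hypothetical, and the source does not say whether sampling is implemented as an independent random draw per frame.

```python
import random

# Ratios copied from the table above; the dictionary keys are hypothetical names.
SAMPLING_RATIO = {
    "hip_fire": 1 / 100,
    "ads_firing": 1 / 100,
    "ads_aiming": 1 / 500,
}


def should_sample(mode, rng):
    """Return True with the probability configured for this mode (assumed per-frame draw)."""
    return rng.random() < SAMPLING_RATIO[mode]


def count_samples(mode, n_frames, seed=0):
    """Count how many of n_frames would be kept for the given mode."""
    rng = random.Random(seed)
    return sum(should_sample(mode, rng) for _ in range(n_frames))
```

Under this reading, 100,000 hip-fire frames yield roughly 1,000 cached images, while the same number of ADS-aiming frames yields roughly 200.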

Feature consistency. HOUYI ensures online and offline feature consistency in two ways:

- Integrated deployment and collection. During live use, before each model inference there is a small probability that the image is cached and uploaded to the server after the session ends. In addition, each crosshair correction record is uploaded as reference material for control algorithm research.

- Lossless compression format. PNG is used to guarantee pixel-level consistency.

Automatic annotation. HOUYI uses the offline model with the most parameters and the highest accuracy to pre-label the samples collected online. After manual inspection and adjustment of the labels, the samples are added to the training set.

Object Detection Algorithm

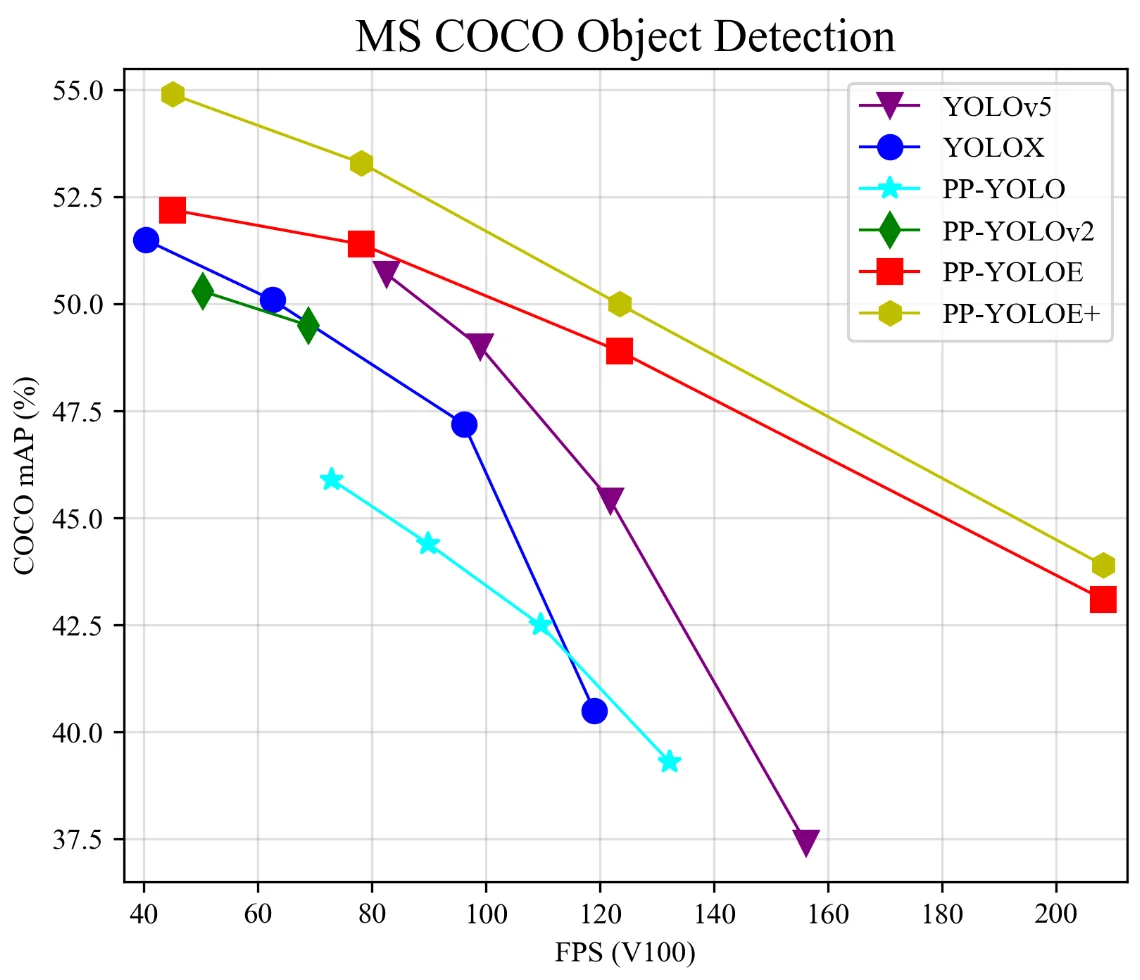

PP-YOLOE+. HOUYI mainly uses PP-YOLOE+ as the online inference model. PP-YOLOE+ is an excellent one-stage anchor-free model based on PP-YOLOv2. Its accuracy-fps tradeoff surpasses many popular YOLO variants. On top of that, PP-YOLOE+ converges in only 80 epochs, which greatly reduces training time and cost compared with the more common 300-epoch setting.

| Model | Epoch | AP0.5:0.95 | AP0.5 | AP0.75 | APsmall | APmedium | APlarge | ARsmall | ARmedium | ARlarge |
|---|---|---|---|---|---|---|---|---|---|---|
| PP-YOLOE+_s | 80 | 43.7 | 60.6 | 47.9 | 26.5 | 47.5 | 59.0 | 46.7 | 71.4 | 81.7 |
| PP-YOLOE+_m | 80 | 49.8 | 67.1 | 54.5 | 31.8 | 53.9 | 66.2 | 53.3 | 75.0 | 84.6 |
| PP-YOLOE+_l | 80 | 52.9 | 70.1 | 57.9 | 35.2 | 57.5 | 69.1 | 56.0 | 77.9 | 86.9 |
| PP-YOLOE+_x | 80 | 54.7 | 72.0 | 59.9 | 37.9 | 59.3 | 70.4 | 57.0 | 78.7 | 87.2 |

Detection examples:

It Moves Like Water

Once a target has been detected on the screen, the next step is to drag the crosshair onto it, which is actually quite difficult. Player input, weapon recoil, and irregular enemy motion all create control error. In addition, server latency, inference latency, and input latency mean that simply moving toward the target may only chase where the enemy used to be. This problem cannot be solved completely by program optimization alone, so a dedicated control algorithm is needed for a better user experience.

PID

Closed-loop control corrects error using feedback from the controlled system. Moving the crosshair can also be viewed as a closed-loop control problem: if we take the current crosshair position as the origin, the enemy coordinate at time \(t\) is the error \(e(t)\). If the enemy is currently at \((100, 200)\), the most naive idea is to move the mouse by \((100, 200)\). But such teleport-like behavior is unnatural and not optimal. A more natural idea is to introduce the most mature and widely used closed-loop control algorithm from continuous systems: PID (proportional-integral-derivative controller).

$$ u(t)=K_{\mathrm{p}} e(t)+K_{\mathrm{i}} \int_{0}^{t} e(\tau) \mathrm{d} \tau+K_{\mathrm{d}} \frac{\mathrm{d} e(t)}{\mathrm{d} t} $$

The first term is the proportional term, the second is the integral term, and the third is the derivative term. The first term is easy to understand: the larger the error, the larger the required correction. But a pure proportional controller quickly runs into trouble. If an enemy moves to the right at a constant speed of 10 and we set \(K_p=0.5\), the crosshair only keeps pace once \(K_p e(t)=10\), so the system settles at \(e(t)=20, u(t)=10\) and the gap never closes.
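The steady state of the proportional-only case can be checked with a small one-dimensional simulation. This is a sketch under simplifying assumptions that are not taken from the source: discrete unit time steps, and an error that grows by the enemy speed and shrinks by the command each step. The function name is hypothetical.

```python
def simulate_p_only(kp, speed, steps, e0=0.0):
    """Simulate e[k+1] = e[k] + speed - kp * e[k] and return the error history."""
    error = e0
    history = []
    for _ in range(steps):
        command = kp * error              # proportional term only
        error = error + speed - command   # enemy moves away, crosshair follows
        history.append(error)
    return history


errors = simulate_p_only(kp=0.5, speed=10.0, steps=60)
final_error = errors[-1]
final_command = 0.5 * final_error
```

In this toy model the error climbs toward 20 and the command toward 10, matching the steady state described above: the crosshair matches the enemy's speed but always trails behind.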

At that point an integral term is needed to eliminate steady-state error. For example, if the accumulated error over the past second is 20 and we set \(K_i=0.25\), then \(u(t)=20\times 0.5+20\times 0.25=15\), which exceeds the enemy’s movement speed and can reduce the distance to the target. A controller with integral action is called a PI controller.

But if \(K_i\) is too small, convergence is slow. If \(K_i\) is too large, jitter appears and convergence also slows down. The best effect is a slight overshoot:

The final derivative term mainly reduces oscillation during control and mitigates overshoot. Since the error is usually shrinking while the crosshair closes in, \(\mathrm{d}e(t)/\mathrm{d}t\) is generally negative, so the derivative term acts like a brake. It reduces the pulling amplitude in advance and prevents the crosshair from overshooting the target. When PID is tuned properly:

The hard parts of applying PID are:

- The enemy’s deliberately irregular motion during gunfights cannot be predicted by PID, so compensation is limited

- Parameter tuning is difficult, which is the classic headache of PID

- Different shooting scenarios require different PID parameters
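The three PID terms discussed in this section can be sketched as a discrete controller. This is a minimal illustration, not HOUYI's implementation. The class name, the unit time step, the toy tracking model, and the gain \(K_d=0.1\) are assumptions; \(K_p=0.5\) and \(K_i=0.25\) are the values used in the examples above.

```python
class PID:
    """Discrete PID controller; dt is the assumed time between updates."""

    def __init__(self, kp, ki=0.0, kd=0.0, dt=1.0):
        self.kp, self.ki, self.kd, self.dt = kp, ki, kd, dt
        self.integral = 0.0
        self.prev_error = None

    def update(self, error):
        # Integral term: running sum of the error.
        self.integral += error * self.dt
        # Derivative term: finite difference, zero on the first call.
        if self.prev_error is None:
            derivative = 0.0
        else:
            derivative = (error - self.prev_error) / self.dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def track(controller, speed=10.0, steps=200):
    """Toy 1-D model: the target drifts by `speed` per step; return the final error."""
    error = 0.0
    for _ in range(steps):
        command = controller.update(error)
        error = error + speed - command
    return error


p_only = track(PID(kp=0.5))
pi = track(PID(kp=0.5, ki=0.25))
pid = track(PID(kp=0.5, ki=0.25, kd=0.1))
```

In this toy model the proportional-only controller stalls at an error of 20, while the PI and PID variants drive the error to zero against a constant-speed target. It does not capture the first difficulty listed above: a target that changes direction unpredictably.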

PVAR: vector autoregressive proportional control

If I said that the main reason I wanted to go on the Journey to the West was to visit the Women’s Kingdom, and that reaching India just happened along the way, you probably would not believe me either.

- Journey to the West Diary

When I talk about aim assist, what I really want to talk about is time-series forecasting.

In a typical correction process, \(e(t)\) decreases rapidly toward zero at first, and then oscillates left and right because of recoil and other factors. The following table shows time-series data from one aiming sequence. enemy_box and confidence are the enemy bounding box and confidence output by the model. ex and ey are the enemy’s horizontal and vertical distances from the crosshair, and ux and uy are the mouse movement commands output by the controller.

| | enemy_box_x1 | enemy_box_y1 | enemy_box_x2 | enemy_box_y2 | confidence | ex | ey | ux | uy |
|---|---|---|---|---|---|---|---|---|---|
| 0 | 266.21786 | 304.98212 | 289.40607 | 362.9163 | 0.548098 | -42.188035 | 13.94921 | -25.31283 | 8.369531 |
| 1 | 261.20413 | 304.77383 | 285.12802 | 362.66193 | 0.705141 | -46.833925 | 13.71788 | -29.106307 | 8.525386 |
| 2 | 273.99313 | 297.0426 | 297.64008 | 360.8898 | 0.700376 | -34.183395 | 8.9662 | -22.454103 | 5.920422 |
| 3 | 289.20618 | 288.6216 | 315.9204 | 360.6148 | 0.700991 | -17.43671 | 4.6182 | -12.794311 | 3.41447 |
| 4 | 304.0824 | 283.4045 | 331.3549 | 357.4968 | 0.622412 | -2.28135 | 0.45065 | -3.746427 | 0.922879 |
| 5 | 311.96606 | 282.16705 | 340.17767 | 357.0965 | 0.715523 | 6.071865 | -0.368225 | 1.40623 | 0.423019 |
| 6 | 313.57156 | 282.32294 | 341.7551 | 357.35828 | 0.734364 | 7.66333 | -0.15939 | 2.544032 | 0.544513 |
| 7 | 313.0578 | 282.24142 | 341.4103 | 357.54837 | 0.754622 | 7.23405 | -0.105105 | 2.42217 | 0.575116 |
| 8 | 311.94815 | 282.4199 | 340.3355 | 356.5956 | 0.768114 | 6.141825 | -0.49225 | 2.91703 | 0.036619 |
| 9 | 311.10672 | 282.8336 | 339.47678 | 356.11987 | 0.770691 | 5.29175 | -0.523265 | 2.516004 | 0.007234 |
| 10 | 309.4765 | 282.83307 | 337.44662 | 356.76465 | 0.778666 | 3.46156 | -0.20114 | 2.424042 | -0.049513 |
| 11 | 305.26862 | 290.59354 | 332.79498 | 361.52643 | 0.760621 | -0.9682 | 6.059985 | 0.129709 | 3.759036 |
| 12 | 303.50372 | 291.24722 | 333.49023 | 363.27325 | 0.66165 | -1.503025 | 7.260235 | -0.325249 | 4.665564 |
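The ex and ey columns in the table are consistent with taking the center of the bounding box and subtracting the center of a 640x640 capture region. The sketch below checks that against row 0. The 640 crop size is an inference from the numbers and from the ADS rows of the sampling table, not something the source states for this sequence, and the function name is hypothetical.

```python
CROP_SIZE = 640  # assumption: the 640x640 ADS capture region


def box_to_error(x1, y1, x2, y2, crop_size=CROP_SIZE):
    """Offset of the box center from the crop center, which is where the crosshair sits."""
    center_x = (x1 + x2) / 2
    center_y = (y1 + y2) / 2
    return center_x - crop_size / 2, center_y - crop_size / 2


# Row 0 of the table above.
ex, ey = box_to_error(266.21786, 304.98212, 289.40607, 362.9163)
```

For row 0 this reproduces ex ≈ -42.188 and ey ≈ 13.949. In that row the logged commands ux and uy are also about 0.6 times the error on both axes, which looks like a plain proportional step. Later rows deviate from that ratio.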

Across thousands of measured aiming records, the median \(e_x\) and \(e_y\) sequences passed the augmented Dickey-Fuller unit-root test for stationarity (ADFULLER, \(p<0.0002\)), so they can be treated as stationary series. Therefore, HOUYI not only corrects the current \(e_t\), but also uses a vector autoregression model, VAR, to predict \(\hat e_{t+1}\).

From the perspective of vector autoregression,

$$ e_{t}=c+A_{1} e_{t-1}+A_{2} e_{t-2}+\cdots+A_{p} e_{t-p} $$

We then inject prior knowledge to adjust the model:

- The current error has a linear relationship with the previous movement command \(U_{t-1}\), so a new explanatory variable is introduced

- The error does not contain a constant term

The final model used by HOUYI is

$$ e_{t}=A_{1} e_{t-1}+A_{2} e_{t-2}+\cdots+A_{p} e_{t-p} +A_u U_{t-1} $$
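A model of this form can be fitted by ordinary least squares. The sketch below is one way to do that with NumPy on synthetic data. It is not HOUYI's training code, and the function names, lag order, and coefficient values are all illustrative assumptions.

```python
import numpy as np


def fit_var_with_input(e, u, p):
    """Least-squares fit of e_t = A_1 e_{t-1} + ... + A_p e_{t-p} + A_u U_{t-1}.

    e, u: arrays of shape (T, k) with errors and commands; no constant term.
    Returns ([A_1, ..., A_p], A_u), each of shape (k, k).
    """
    e = np.asarray(e, dtype=float)
    u = np.asarray(u, dtype=float)
    k = e.shape[1]
    # One regressor row per time step: [e_{t-1}, ..., e_{t-p}, U_{t-1}].
    X = np.array([
        np.concatenate([e[t - i] for i in range(1, p + 1)] + [u[t - 1]])
        for t in range(p, len(e))
    ])
    Y = e[p:]
    B, *_ = np.linalg.lstsq(X, Y, rcond=None)
    A = [B[i * k:(i + 1) * k].T for i in range(p)]
    A_u = B[p * k:(p + 1) * k].T
    return A, A_u


def predict(A, A_u, e_lags, u_prev):
    """One-step prediction; e_lags[0] is e_{t-1}, e_lags[1] is e_{t-2}, and so on."""
    out = A_u @ np.asarray(u_prev, dtype=float)
    for A_i, e_i in zip(A, e_lags):
        out = out + A_i @ np.asarray(e_i, dtype=float)
    return out


# Synthetic, noise-free data generated from made-up coefficients.
rng = np.random.default_rng(0)
A1_true = np.array([[0.5, 0.1], [0.0, 0.4]])
A2_true = np.array([[0.2, 0.0], [0.0, 0.1]])
Au_true = np.array([[-0.6, 0.0], [0.0, -0.6]])
T = 200
u = rng.normal(size=(T, 2))
e = np.zeros((T, 2))
e[0], e[1] = [40.0, -10.0], [30.0, -8.0]
for t in range(2, T):
    e[t] = A1_true @ e[t - 1] + A2_true @ e[t - 2] + Au_true @ u[t - 1]

A, A_u = fit_var_with_input(e, u, p=2)
e_hat = predict(A, A_u, [e[T - 2], e[T - 3]], u[T - 2])
```

Because the synthetic data is noise-free, the fit recovers the generating matrices and `e_hat` reproduces the last observed error. On real aiming records the residual would be nonzero, and the lag order \(p\) would need to be chosen empirically.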

VAR and the proportional controller together form HOUYI’s main control algorithm, PVAR.

It Responds Like An Echo

Performance optimization is both the most important and the most labor-intensive part of HOUYI. Low-latency, high-throughput image processing is the foundation of the final experience.

Screen capture. There are many ways to capture the screen, but the most efficient one is DXGI, which uses the GPU to process textures directly. Capture speed is therefore limited almost entirely by GPU rendering speed. On top of that, HOUYI specifically optimizes memory efficiency for partial capture: only data from the required region is read, and data type conversion is performed only for the target area.
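Partial capture starts from knowing which rectangle to read. The sketch below computes a centered square region, assuming the capture region is centered on the screen (as the center-region example earlier suggests). The function name and the 1920x1080 resolution are illustrative assumptions.

```python
def center_crop_rect(screen_w, screen_h, size):
    """Return (left, top, right, bottom) of a size x size square centered on the screen."""
    if size > screen_w or size > screen_h:
        raise ValueError("crop region is larger than the screen")
    left = (screen_w - size) // 2
    top = (screen_h - size) // 2
    return left, top, left + size, top + size


ads_rect = center_crop_rect(1920, 1080, 640)
hip_rect = center_crop_rect(1920, 1080, 1080)
```

On a 1920x1080 screen, the 640x640 region covers about 20% of the pixels, which is why reading and converting only that rectangle saves memory bandwidth.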

Preprocessing. The main performance killer during preprocessing is still memory access efficiency. HOUYI removes all unnecessary memory copies and allocates a fixed region in GPU memory for model input, roughly doubling the number of corrections per second.

Inference engine. The model is exported to ONNX and then deployed with Nvidia’s cutting-edge TensorRT.

The core of NVIDIA TensorRT is a C++ library that facilitates high-performance inference on NVIDIA graphics processing units (GPUs). TensorRT takes a trained network, which consists of a network definition and a set of trained parameters, and produces a highly optimized runtime engine that performs inference for that network. TensorRT provides API’s via C++ and Python that help to express deep learning models via the Network Definition API or load a pre-defined model via the parsers that allow TensorRT to optimize and run them on an NVIDIA GPU. TensorRT applies graph optimizations, layer fusion, among other optimizations, while also finding the fastest implementation of that model leveraging a diverse collection of highly optimized kernels. TensorRT also supplies a runtime that you can use to execute this network on all of NVIDIA’s GPUs from the Kepler generation onwards. TensorRT also includes optional high speed mixed precision capabilities introduced in the Tegra X1, and extended with the Pascal, Volta, Turing, and NVIDIA Ampere GPU architectures.

It is worth mentioning that TensorRT performs hardware-specific optimization for different GPUs, so the first startup requires about 15 minutes to rebuild the model runtime. In this application scenario, that cost is negligible.

Postscript

If you want to use cheats for the pleasure of casually deleting other players, do not use Houyi;

If you do not approve of using external power to break through human limits, do not use Houyi;

If you think it is cool to use algorithms and compute to surpass human limits when processing the same input data, and if you want to use computer technology to resist the alienation imposed by electronic shooting games, then may the Houyi Project help you win an intelligent war.
