Portfolio Optimization for Long-Only Multi-Factor Equity Strategies

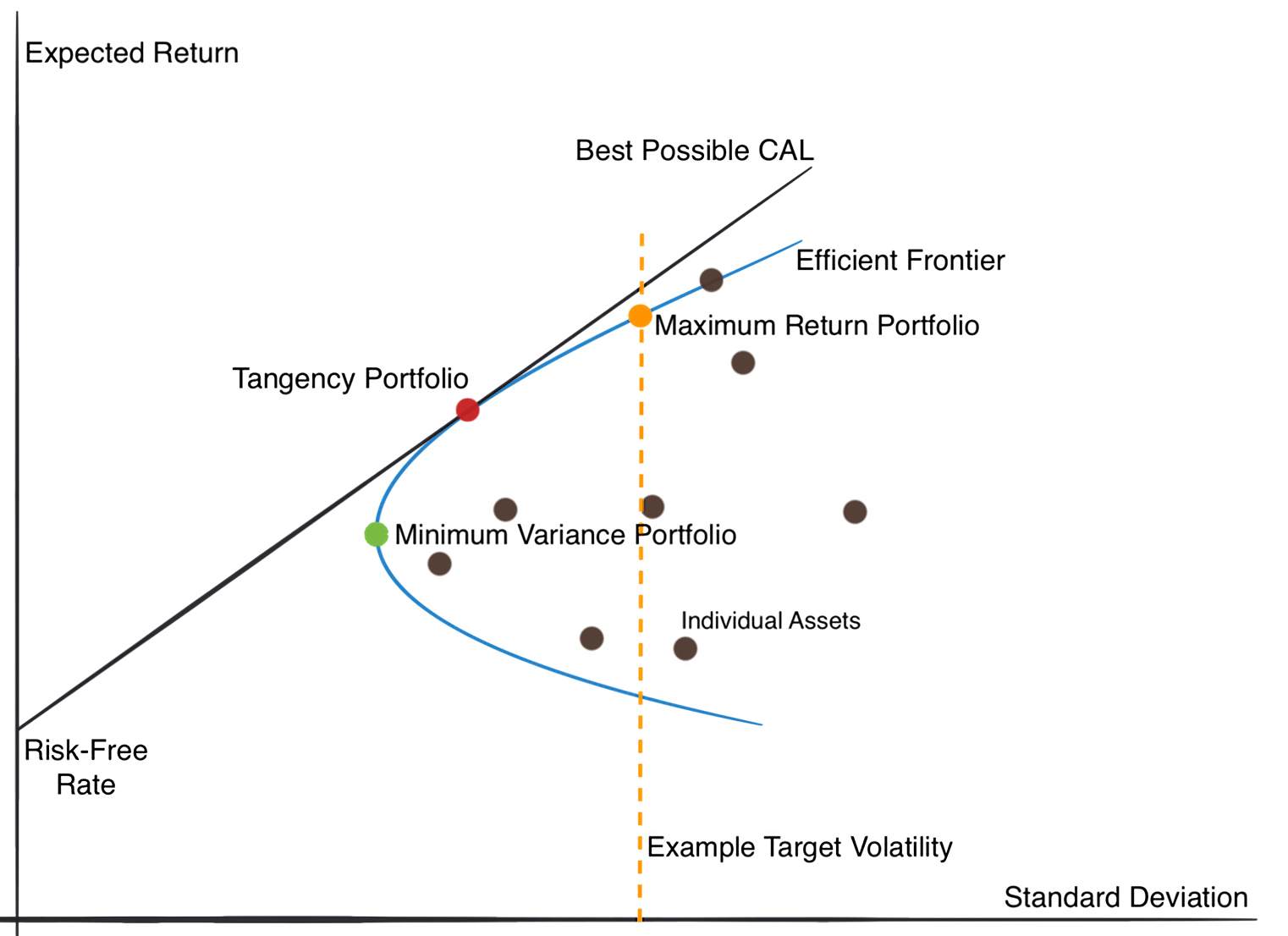

Quantitative investment strategies often rely on mathematical programming to determine portfolio weights. One reason is to balance objectives such as return and risk more scientifically; another is to use optimization as the layer where subjective and objective constraints can be introduced in a unified way. This article focuses on short-horizon, long-only equity alpha strategies built on multi-factor theory, and reviews practical portfolio optimization methods. It also summarizes mainstream approaches for forecasting the key inputs required by optimization: expected return, risk, and transaction cost.

Portfolio Optimization Framework

Objective and Constraints

Mean-variance optimization (MVO) is the most widely used optimization framework:

$$ \max_w w^T \mu - \frac{\gamma}{2} w^T \Sigma w $$

On top of that, adding a linear penalty on turnover leads to the objective used in this article:

$$ \begin{aligned} {maximize} \quad & w^T \mu - \gamma \left(f^T \Sigma_{\lambda} f + w^TDw \right) - c^T |w - w_0| \\ {subject \enspace to} & \quad {\bf 1}^T w = 1\\ & \quad 0 \le w \le w_{max} \\ & \quad f = \beta^Tw \\ & \quad |f| \le f_{max} \\ \end{aligned} $$

Here \(\mu\) is the forecast of next-day expected return, provided by the return model. The second term is portfolio risk after factor-model decomposition, where \(\gamma\) is the risk-aversion coefficient. A detailed discussion can be found in Risk Models for Alpha Strategies. In equity markets there may be thousands of candidate assets, which means the covariance matrix can easily grow to tens of millions of entries. Factor models reduce dimensionality dramatically and simplify both estimation and computation. The last term is a linear turnover penalty, where c is either estimated by a transaction-cost model or set as a hyperparameter. It can also be replaced by a quadratic penalty.

The constraints here are intentionally kept simple to maximize alpha realization. The portfolio is constrained to be fully invested and long-only, with each stock capped at \(w_{max}\) and factor exposure capped by \(f_{max}\). It can be shown that a maximum single-name weight limit is equivalent to compressing expected returns, which helps mitigate large return-forecast errors. Since factor covariance estimates are themselves imperfect, the portfolio also constrains absolute style exposure. Compared with the more heavily constrained industry standard of enhanced-index optimization, this setup removes tracking-error constraints because it does not benchmark to an index. That simplifies the problem to a quadratic program. In addition, market-cap and industry exposure are not constrained directly in the optimizer; instead they are controlled implicitly through the risk model, which avoids infeasibility. That point is discussed further in the risk section.

Implementation

Using cvxpy as an example, the optimization problem can be written as:

1 | import numpy as np |

In terms of compute performance, factor risk models improve the speed of full-market stock-selection optimization by roughly two to three orders of magnitude. For backtesting, they are close to mandatory. Most of the remaining implementation details matter far less. As for solvers, this is a relatively simple QP problem and many choices work. I benchmarked four commonly used solvers:

| Time | Multiple | |

|---|---|---|

| Mosek | 159 ms ± 319 µs | 1 |

| ECOS | 375 ms ± 2.79 ms | 2.35 |

| OSQP | 2050 ms ± 27.6 ms | 12.89 |

| CLARABEL | 280 ms ± 4.9 ms | 1.76 |

Commercial solvers are noticeably faster than open-source ones. Among open-source solvers, Clarabel performed best and was not far from Mosek, which also explains why it became cvxpy‘s new default.

Return Forecasting

From the simplest supervised-learning perspective, return prediction means estimating the expected return \(E[r_{i,t}|X_{i,t}]\) given return-predictive variables \(X_{i,t}\). In practice, this is extremely difficult. First, because the market environment, investor sentiment, and regulation all change over time, the mapping from features to expected return, \(f_t:X_t \mapsto R_t\), is time-varying: \(f_t \ne f_{t+1}\). This is a form of concept drift. To address it, practitioners use techniques such as model ensembling and periodic retraining, while also trying to remove systematic effects from both features and labels, for example by modeling excess return rather than raw return. Second, expected stock return is not directly observable even ex post: most of the variance in realized returns is pure noise. Unlike classical machine-learning tasks, financial data has small effective sample sizes and extremely noisy labels, so regularization is essential to prevent overfitting.

In practice, the two dominant approaches to return forecasting are gradient-boosted decision trees (GBDTs) and neural networks, each with different strengths. Tree models are fast, robust to overfitting, and do not require preprocessing such as missing-value imputation or distribution reshaping. On medium-scale tabular data they often outperform neural nets. Neural networks, however, have more flexible architectures and loss functions and complement GBDTs well. GBDTs usually treat one sample as one stock at one date, while neural networks can treat each cross-section as one batch and directly model cross-sectional relationships, for example through CCC loss:

$$ CCC=\frac{2 \rho_{x y} \sigma_{x} \sigma_{y}}{\sigma_{x}^{2}+\sigma_{y}^{2}+\left(\mu_{x}-\mu_{y}\right)^{2}} $$

Frontier discussions in return modeling often focus on graph learning for stock relations and attention mechanisms for time-varying features. Many such papers are tested on very simple factor libraries and remain far from industry practice. In my view they often feel more impressive than useful, though their ideas can still be instructive.

Return prediction in financial markets will inevitably be weak. It is often more useful to treat the return model as a factor synthesizer: a way to combine the prior knowledge embedded in the factor library with whatever predictive signal remains.

Risk Forecasting

The goal of a multi-factor risk model is to approximate the internal structure of stock covariance, that is, common risk plus idiosyncratic risk, and thereby compute portfolio volatility.

Risk Factors

Risk factors represent the drivers behind common stock-price movements. Traditionally these factors are hand-crafted. Market capitalization is a typical example: stocks with similar size often move similarly. Regressing cross-sectional stock returns on risk factors provides a measure of explanatory power.

Hand-crafted expert factors have two obvious weaknesses. First, factor omission is unavoidable, especially for complex nonlinear effects. Second, the factors used in the risk model, \(X_{risk}\), are not aligned with the factors used in the return model, \(X_{ret}\), which can lead to risk mismeasurement during portfolio optimization.

The DRM model addresses this by learning risk factors \(X_{risk}\) with deep learning, reducing manual work while raising the ceiling of the model. One can learn a mapping \(g:X_{ret} \mapsto X_{risk}\) from return predictors to risk factors, and can also incorporate variables such as market cap and industry membership, converting them into a unified latent representation that controls portfolio risk more effectively. By careful loss design, DRM encourages both independence and stability among factors.

Factor Covariance

Once risk-factor exposures are obtained for individual stocks, a cross-sectional regression can estimate factor returns \(\lambda_{i,t}\). Intuitively, this measures how much return one earns at time t from one unit of exposure to factor i. The main job of a risk model is not to forecast directional factor returns, but to make sure factor returns are sufficiently volatile while remaining directionally stable over the long run.

Barra argues that factor-return correlations are relatively stable, so they should be estimated over longer windows, while factor volatilities are more closely tied to recent history. The standard practice is therefore to estimate a time-decay-weighted correlation matrix and a time-decay-weighted variance vector separately, then combine them into the factor return covariance matrix \(\Sigma_\lambda\).

If the risk model is estimated on daily factors while the investment horizon is longer, such as weekly, then one should also account for autocorrelation in factor returns and apply Newey-West adjustments. In this article the portfolio is rebalanced daily, so that complication is ignored.

Since the final goal of risk forecasting is to reduce realized volatility in the optimized portfolio, the model must also be corrected for optimizer bias. Performing an eigen-decomposition on \(\Sigma_\lambda\) gives

$$ \Sigma_\lambda = UDU^T $$

where \(D\) is diagonal and contains eigenvalues, while \(U\) contains eigenvectors. Since

$$ D = U^T \Sigma_\lambda U $$

has exactly the same form as portfolio risk, the eigenvalues in \(D\) can be interpreted as portfolio risks and the columns of \(U\) as the corresponding portfolio weights. Barra calls these eigen-portfolios. By examining the bias statistics of eigen-portfolios with different eigenvalues, one finds that low-volatility portfolios tend to be underestimated while high-volatility portfolios are overestimated. Rescaling the eigenvalues and reconstructing the covariance matrix completes the eigenvalue adjustment, after which the bias across eigen-portfolios is substantially reduced.

Finally, Volatility Regime Adjustment (VRA) uses bias statistics over a time window to determine whether the model is systematically overestimating or underestimating factor risk. Define the cross-sectional bias statistic as

$$ B_{t}^{F}=\sqrt{\frac{1}{K} \sum_{k}\left(\frac{\lambda_{k, t}}{\sigma_{k, t-1}}\right)^{2}} $$

Taking a weighted average over time yields the adjustment coefficient \(\overline{B}=\Sigma_t{w_t B_t}\), which rescales the original factor covariance:

$$ \tilde{\Sigma_\lambda} = \overline{B} \Sigma_\lambda $$

Idiosyncratic Return Volatility

Bayesian shrinkage adjustment Volatility is persistent but also exhibits some reversal: low-volatility stocks often become more volatile later, while high-volatility stocks often cool down. Barra addresses this with Bayesian shrinkage. The idea is to shrink each raw volatility estimate toward a prior, where the prior is the average volatility of stocks in the same market-cap bucket. More generally, stocks can be clustered by other criteria as well, and the idiosyncratic volatility estimate \({\sigma}{i,t}\) can be shrunk toward the cluster mean \(\overline{\sigma_k}\). The shrinkage strength \(v{i,t}\) depends on the distance to the cluster center and the within-cluster variance:

$$ \tilde{\sigma}_{i,t} = (1-v_{i,t}){\sigma}_{i,t} + v_{i,t}\overline{\sigma_k} $$

Grouping stocks by predicted daily volatility shows a monotonic bias pattern before shrinkage: low-volatility groups are underestimated and high-volatility groups are overestimated. If one uses k-means to cluster stocks by style exposure and then shrinks volatility estimates within cluster, the bias statistics move much closer to 1 with no obvious trend.

Volatility regime adjustment for idiosyncratic volatility is conceptually identical to the factor-level VRA above. It asks whether the model is systematically overestimating or underestimating stock-specific risk within a cross-section, then rescales the diagonal matrix of idiosyncratic variance to make covariance estimates respond faster:

$$ B_{t}^{D}=\sqrt{\frac{1}{N} \sum_{n}\left(\frac{u_{n, t}}{\sigma_{n, t-1}}\right)^{2}} $$

Looking at the time series of this bias statistic, VRA makes idiosyncratic volatility forecasts much more responsive and substantially reduces extreme overestimation and underestimation. The statistic fluctuates more tightly around 1.0.

Transaction Cost Forecasting

The strategy considered here is designed for relatively small capital, so the optimizer uses a simple linear turnover penalty, where c can even be treated as a constant:

$$ cost = c^T |w - w_0| $$

Strictly speaking, however, different stocks have different liquidity, so the true transaction cost varies by name. Forecasting transaction cost inside the optimizer should get us closer to the real optimum. The closer one gets to high-frequency trading and execution algorithms, the more secretive the literature becomes. To give a more intuitive picture of transaction cost analysis (TCA), I briefly introduce the I-star market-impact model here.

The I-Star model was first proposed by Kissell and Malamut (1998). It begins by modeling the instantaneous price impact caused by order participation:

$$ I_{b p}^{*}=a_{1} \cdot\left(\frac{Q}{A D V}\right)^{a 2} \cdot \sigma^{a 3} $$

where \(a_1,a_2,a_3\) are parameters, \(Q\) measures market imbalance, \(ADV\) is average daily volume, and \(\sigma\) is daily stock-price volatility. In trading, I-Star represents the theoretical instantaneous impact cost generated if the order is sent to the market. It can also be interpreted as the total payment required to attract additional counterparties, for example the discount a seller must give up in order to complete the order.

Total market impact combines instantaneous impact and permanent price impact:

$$ M I_{b p}=b_{1} \cdot I^{*} \cdot P O V^{a_4}+\left(1-b_{1}\right) \cdot I^{*} $$

where \(POV\) is the participation ratio and \(a_4, b_1\) are model parameters.

Ex post transaction cost can then be measured using VWAP and the arrival price \(P_0\):

$$ Cost = ln(\frac{vwap}{P_0}) \cdot Side $$

Portfolio Optimization for Long-Only Multi-Factor Equity Strategies